Categorical Flow Maps

Authors: Daan Roos, Oscar Davis, Floor Eijkelboom, Michael Bronstein, Max Welling, İsmail İlkan Ceylan, Luca Ambrogioni, Jan-Willem van de Meent

Paper: https://arxiv.org/abs/2602.12233

Code: N/A

Model: N/A

Affiliation: UvA-Bosch Delta Lab, University of Oxford, AITHYRA, TU Wien, Radboud University

TL;DR

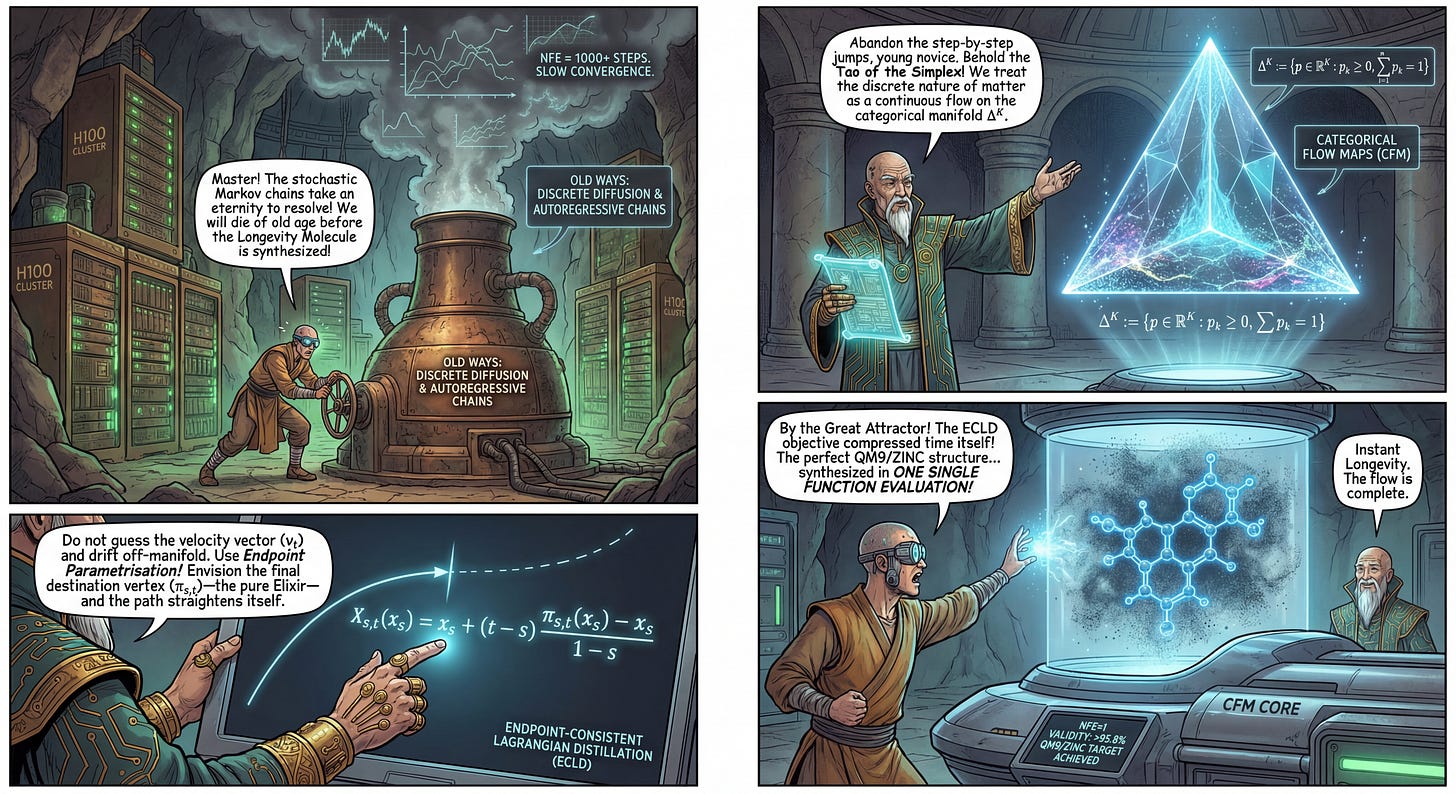

WHAT was done? The authors introduce Categorical Flow Maps (CFM), a method for training continuous-time generative flow models on the probability simplex to produce discrete data such as text, molecular graphs, and images. They propose a novel endpoint-based parametrisation that rigorously respects the geometry of the simplex and a corresponding self-distillation objective, Endpoint-Consistent Lagrangian Distillation (ECLD). This framework enables high-quality generation in as few as one or two steps.

WHY it matters? While continuous diffusion models have successfully moved to few-step generation via consistency distillation, discrete modalities have lagged behind, often relying on computationally expensive autoregressive loops or high-step discrete diffusion chains. CFM provides a mathematically grounded framework for applying flow matching and self-distillation to discrete data, achieving state-of-the-art results for single-step generation on molecular graphs (QM9, ZINC) and competitive perplexity on text benchmarks (Text8, LM1B).

Details

The Discrete Inference Bottleneck

The current generative landscape is bifurcated. In the continuous domain of images and audio, techniques like Consistency Models and Rectified Flows have successfully compressed inference from hundreds of steps down to one or two. However, discrete data (language, graphs, and quantized images) remains stubbornly expensive to generate. Autoregressive models need one forward pass per generated token, so their sampling cost scales linearly with sequence length, and discrete diffusion models like D3PM often require many steps to resolve the stochasticity of Markov chains on discrete states. Previous attempts to apply flow matching to discrete data often treated the data as continuous embeddings without strictly enforcing the geometry of the probability simplex, or they struggled to apply self-distillation effectively due to the discontinuous nature of discrete transitions.

The authors of Categorical Flow Maps address this by framing the generation of discrete data not as a jump between discrete states, but as a continuous flow of probability mass on the simplex. By defining the transport problem on this continuous substrate, they unlock the arsenal of flow matching and distillation techniques previously reserved for continuous Euclidean data.
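To make the idea of "a continuous flow of probability mass on the simplex" concrete, the following is a minimal sketch, not the authors' implementation. It assumes a straight-line interpolant between a random simplex point and a one-hot target, and a velocity derived from a predicted endpoint; the paper's exact parametrisation and the ECLD objective are not reproduced here. All function names (`sample_simplex`, `endpoint_velocity`, `euler_sample`) and the `predict_endpoint` callback are hypothetical stand-ins for illustration.

```python
import math
import random


def sample_simplex(k, rng):
    """Draw a point from the (k-1)-simplex by normalising exponential draws."""
    draws = [rng.expovariate(1.0) for _ in range(k)]
    total = sum(draws)
    return [d / total for d in draws]


def one_hot(index, k):
    """Vertex of the simplex corresponding to a single discrete category."""
    return [1.0 if i == index else 0.0 for i in range(k)]


def interpolate(x0, x1, t):
    """Assumed straight-line path x_t = (1 - t) * x0 + t * x1.

    A convex combination of two simplex points is itself on the simplex.
    """
    return [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]


def endpoint_velocity(x_t, x1_hat, t):
    """Velocity implied by a predicted endpoint: (x1_hat - x_t) / (1 - t)."""
    return [(b - a) / (1.0 - t) for a, b in zip(x_t, x1_hat)]


def euler_sample(predict_endpoint, x0, num_steps):
    """Integrate the flow from t = 0 to t = 1 with uniform Euler steps.

    predict_endpoint(x, t) is a hypothetical model returning a point on the
    simplex (for example, a softmax output). Each update moves x a fraction
    dt / (1 - t) <= 1 of the way towards that point, so x stays on the simplex.
    """
    x = list(x0)
    dt = 1.0 / num_steps
    for step in range(num_steps):
        t = step * dt
        v = endpoint_velocity(x, predict_endpoint(x, t), t)
        x = [a + dt * b for a, b in zip(x, v)]
    return x
```

With an oracle `predict_endpoint` that always returns the true one-hot target, `euler_sample` lands on that vertex for any `num_steps`, including a single step. This is one way to see why endpoint-style predictions pair naturally with few-step generation: the quality of a one-step sample depends entirely on how good the endpoint prediction is at `t = 0`.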