Compute Optimal Tokenization

Authors: Tomasz Limisiewicz, Artidoro Pagnoni, Srini Iyer, Mike Lewis, Sachin Mehta, Alisa Liu, Margaret Li, Gargi Ghosh, Luke Zettlemoyer

Paper: https://arxiv.org/abs/2605.01188v1

Code: https://co-tok.github.io

TL;DR

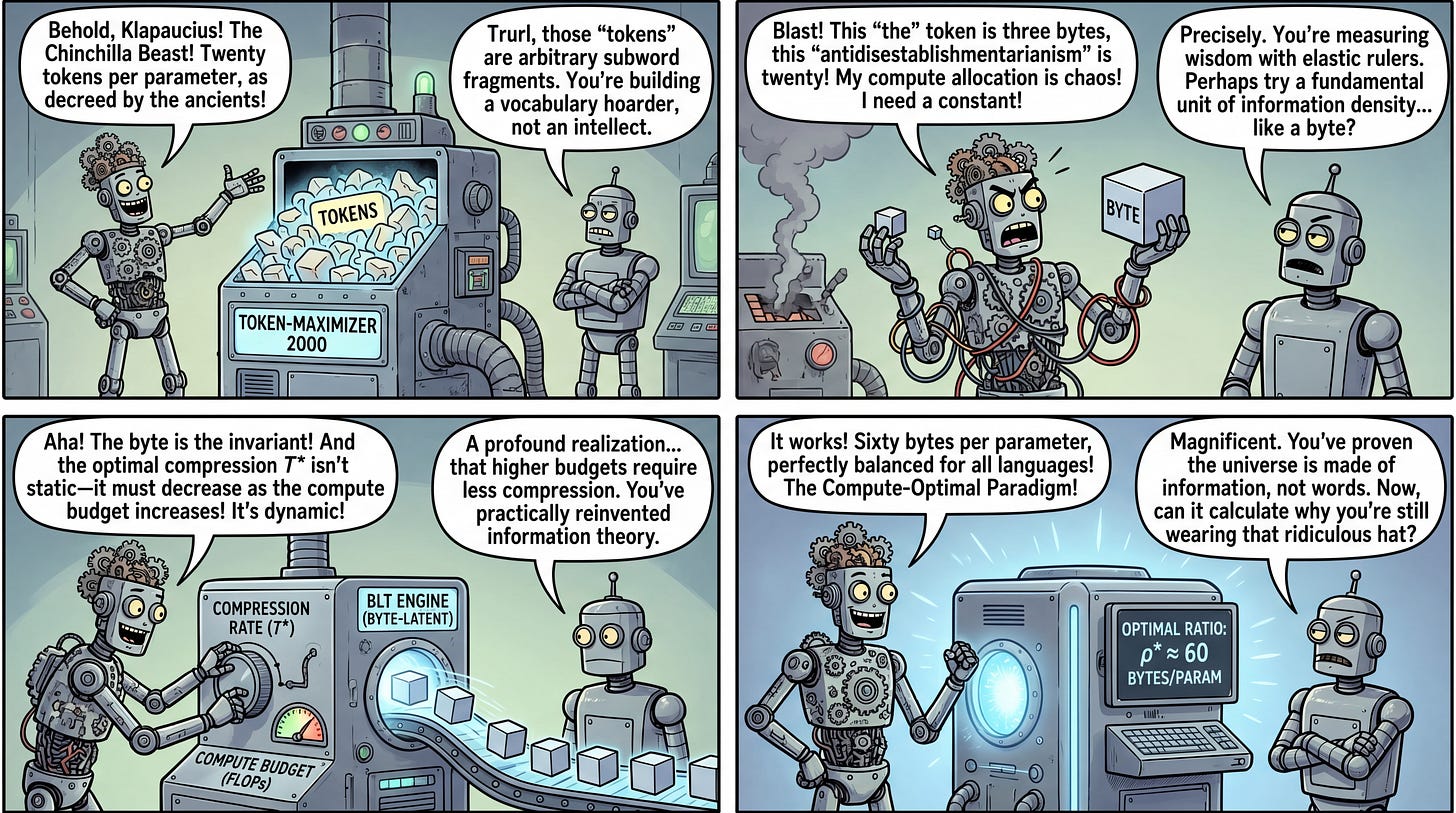

WHAT was done? The authors systematically derived compression-aware neural scaling laws by training nearly 1,300 models to determine how information granularity (bytes per token) impacts optimal compute allocation.

WHY it matters? This work presents evidence that the widely accepted heuristic of scaling models by 20 tokens per parameter is an artifact of specific subword tokenizers. A tokenizer-agnostic scaling law expressed in bytes provides a more robust framework for maximizing compute efficiency across diverse languages and modalities.

Executive summary: Research teams optimizing large-scale pre-training runs often treat the tokenization scheme as a static preprocessing step. This paper reframes tokenization as a dynamic scaling variable. By optimizing the “compression rate” (information density, measured in bytes per token), the authors demonstrate that training data should scale proportionally to model parameters in bytes, not tokens. They also find that the optimal compression rate is compute-dependent: larger FLOP budgets favor lower compression. Together, these results offer a new blueprint for training highly efficient, massively multilingual foundation models.

Details

The Tokenization Artifact Bottleneck in Neural Scaling

Foundation model scaling is largely governed by established scaling laws, most notably the heuristic derived in Training Compute-Optimal Large Language Models (Chinchilla), which posits an optimal ratio of approximately 20 training tokens per model parameter. However, a critical blind spot in this heuristic is its reliance on a fixed tokenization scheme. Expressing data volume strictly in tokens ignores the variable information density that each token represents, essentially binding fundamental scaling behavior to the arbitrary mechanics of Byte-Pair Encoding (BPE) tokenizers. This study isolates the token as a variable to identify the true invariant in scaling behavior, exposing the extent to which popular tokenizers inherently skew compute allocation.
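To make the token-versus-byte distinction concrete, here is a minimal sketch of the arithmetic, not the authors' code. It applies the approximate 20 tokens-per-parameter heuristic and converts the resulting token budget into bytes for several compression rates. The function names and the subword bytes-per-token values are hypothetical and chosen only for illustration; the byte-level rate of 1.0 follows from the definition of a byte-level model.

```python
# Sketch: how a fixed tokens-per-parameter rule maps to different byte budgets.
# All names and the subword compression rates below are illustrative assumptions.

TOKENS_PER_PARAM = 20.0  # approximate Chinchilla heuristic cited in the text


def token_budget(num_params: float, tokens_per_param: float = TOKENS_PER_PARAM) -> float:
    """Training tokens implied by a tokens-per-parameter heuristic."""
    return num_params * tokens_per_param


def byte_budget(num_params: float, bytes_per_token: float,
                tokens_per_param: float = TOKENS_PER_PARAM) -> float:
    """Training bytes implied by the same heuristic at a given compression rate."""
    return token_budget(num_params, tokens_per_param) * bytes_per_token


def bytes_per_param(bytes_per_token: float,
                    tokens_per_param: float = TOKENS_PER_PARAM) -> float:
    """Bytes of training data per parameter implied by the heuristic."""
    return tokens_per_param * bytes_per_token


if __name__ == "__main__":
    num_params = 1e9  # a 1B-parameter model, chosen for illustration
    # Hypothetical compression rates (bytes per token); not taken from the paper.
    tokenizers = {"byte-level": 1.0, "subword A": 4.0, "subword B": 4.5}
    for name, bpt in tokenizers.items():
        print(f"{name}: {token_budget(num_params):.2e} tokens, "
              f"{byte_budget(num_params, bpt):.2e} bytes, "
              f"{bytes_per_param(bpt):.1f} bytes/param")
```

Under these assumed rates, the same token count corresponds to very different amounts of raw data per parameter, which is why a rule stated in tokens cannot be tokenizer-independent.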