Discovering Multiagent Learning Algorithms with Large Language Models

Authors: Zun Li, John Schultz, Daniel Hennes, Marc Lanctot

Paper: https://arxiv.org/abs/2602.16928

Code: N/A

Model: N/A

Affiliation: Google DeepMind

TL;DR

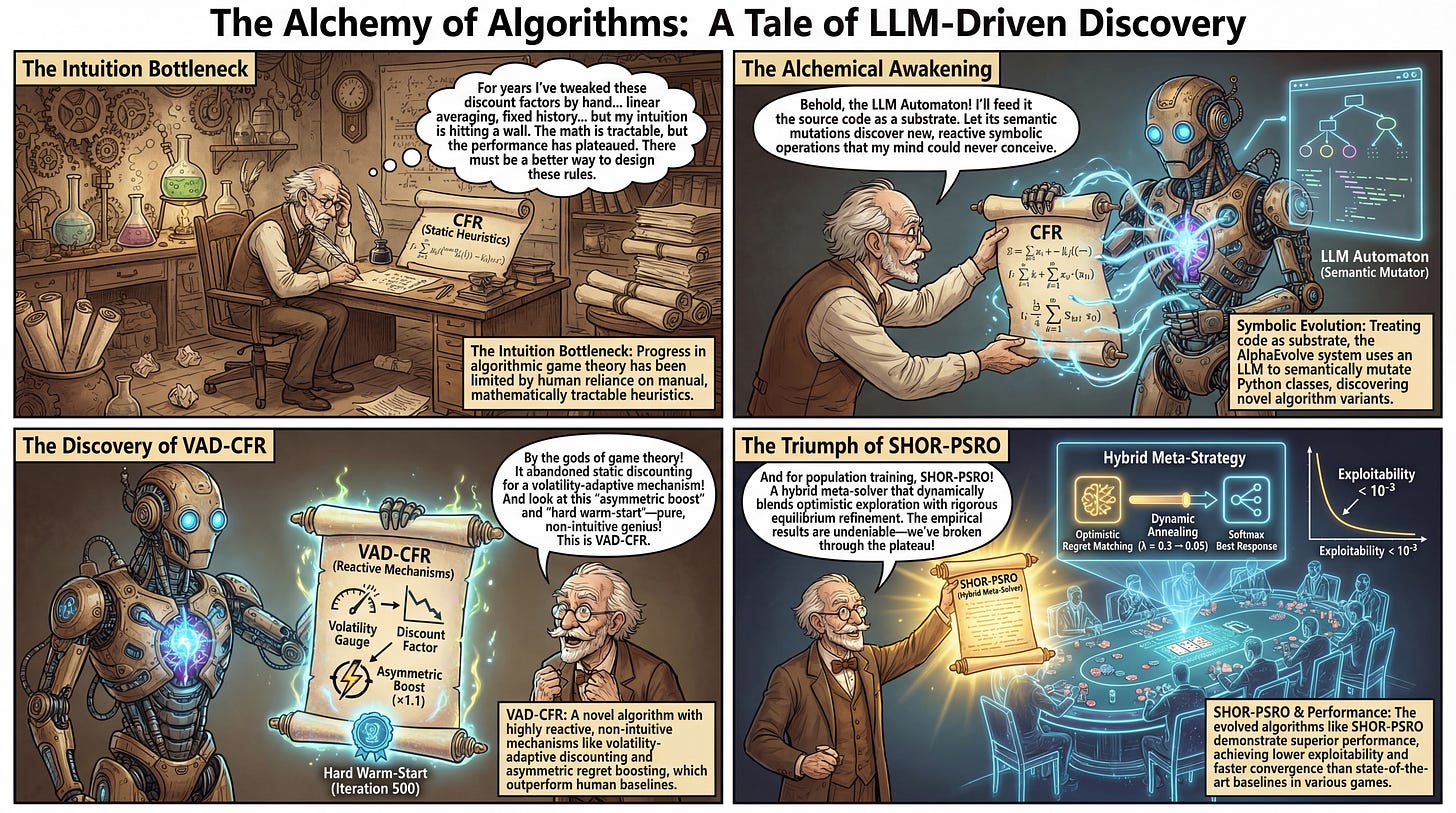

WHAT was done? The authors deployed an LLM-driven evolutionary coding system, AlphaEvolve, to automatically discover entirely new algorithm variants for Multi-Agent Reinforcement Learning (MARL). By semantically mutating Python source code, the system discovered novel, non-intuitive extensions to both Counterfactual Regret Minimization (CFR) and Policy Space Response Oracles (PSRO).

WHY it matters? Progress in algorithmic game theory has historically been bottlenecked by human intuition: researchers rely on manual trial and error to find mathematically tractable heuristics for regret discounting or meta-strategy blending. This research demonstrates that treating algorithm design as a symbolic search problem yields highly effective, reactive mechanisms (such as volatility-adaptive discounting and asymmetric regret boosting) that significantly outperform state-of-the-art human-designed baselines.

Details

The Intuition Bottleneck in Algorithmic Game Theory

For over a decade, practical progress in imperfect-information game solvers has relied heavily on iterative, manual refinement. Foundational frameworks like Counterfactual Regret Minimization (CFR) and Policy Space Response Oracles (PSRO) rest on solid theoretical guarantees, but achieving state-of-the-art empirical performance requires careful structural choices. Researchers have spent years hand-crafting modifications, such as linear averaging in CFR+ or fixed historical discounting in Discounted CFR (DCFR), to accelerate convergence. However, human designers naturally default to mathematically tractable, static heuristics, and navigating the combinatorial space of potential update rules by intuition alone has become a severe bottleneck. The authors propose shifting the paradigm entirely: instead of manually deriving regret bounds to justify heuristic tweaks, they use Large Language Models (LLMs) to automatically evolve the semantic logic of the algorithms themselves.
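To make the "static heuristics" mentioned above concrete, here is a minimal Python sketch of the hand-designed regret updates that CFR+ and DCFR apply at a single decision point. It is an illustration under stated assumptions, not code from the paper: the function names are hypothetical, and the default exponents (`alpha=1.5`, `beta=0.0`) are the values commonly quoted for DCFR rather than anything this summary specifies.

```python
from typing import List


def regret_matching(cum_regret: List[float]) -> List[float]:
    """Play each action in proportion to its positive cumulative regret."""
    positive = [max(r, 0.0) for r in cum_regret]
    total = sum(positive)
    if total <= 0.0:
        # No action has positive regret: fall back to the uniform policy.
        return [1.0 / len(cum_regret)] * len(cum_regret)
    return [p / total for p in positive]


def cfr_plus_update(cum_regret: List[float], inst_regret: List[float]) -> List[float]:
    """CFR+-style update: add the instantaneous regret, then floor at zero."""
    return [max(r + d, 0.0) for r, d in zip(cum_regret, inst_regret)]


def dcfr_update(
    cum_regret: List[float],
    inst_regret: List[float],
    t: int,
    alpha: float = 1.5,  # assumed default exponent for positive regrets
    beta: float = 0.0,   # assumed default exponent for negative regrets
) -> List[float]:
    """DCFR-style update: add the instantaneous regret, then discount.

    The discount weights depend only on the iteration counter t, which is
    what makes the schedule fixed rather than reactive to the game.
    """
    pos_weight = t**alpha / (t**alpha + 1.0)
    neg_weight = t**beta / (t**beta + 1.0)
    updated = []
    for r, d in zip(cum_regret, inst_regret):
        s = r + d
        updated.append(s * (pos_weight if s > 0 else neg_weight))
    return updated


policy = regret_matching([1.0, 3.0, -2.0])
floored = cfr_plus_update([1.0, -0.5], [-2.0, 0.2])
discounted = dcfr_update([1.0, -1.0], [1.0, -1.0], t=1)
```

In both rules the schedule is chosen in advance by the designer. The volatility-adaptive mechanisms described in the TL;DR would replace such fixed weights with quantities computed from the observed regret stream.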