Efficient Universal Perception Encoder

Authors: Chenchen Zhu, Saksham Suri, Cijo Jose, Maxime Oquab, Marc Szafraniec, Wei Wen, Yunyang Xiong, Patrick Labatut, Piotr Bojanowski, Raghuraman Krishnamoorthi, Vikas Chandra

Paper: https://arxiv.org/abs/2603.22387v1

Code: N/A

Model: N/A

TL;DR

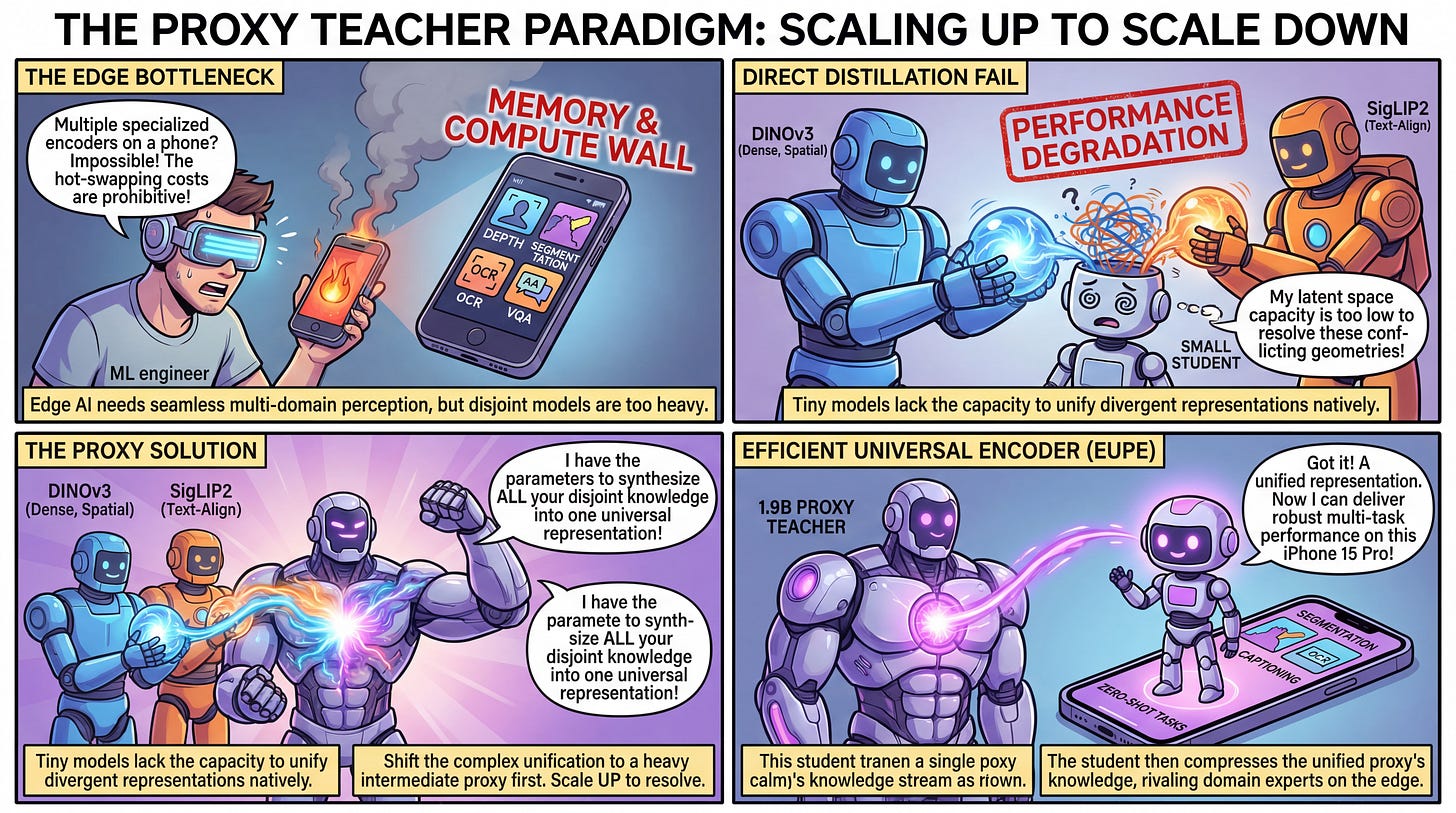

WHAT was done? The paper introduces the Efficient Universal Perception Encoder (EUPE), a three-stage distillation pipeline that creates a compact vision encoder capable of robust zero-shot performance across image understanding, dense prediction, and vision-language tasks. Rather than distilling multiple domain-expert models directly into a small student, the authors first distill the experts into a massive 1.9-billion-parameter “proxy teacher,” which then teaches the efficient student model.
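To make the ordering of the two distillation steps described above concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the summary does not state the loss or the architecture, so the cosine feature-matching loss, the per-expert projection heads, and every name below (`proxy_loss`, `student_loss`, `expert_a`, `expert_b`) are assumptions chosen purely for illustration. Feature vectors are toy lists rather than real encoder outputs.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity of two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def linear(weights, features):
    """Apply a projection head stored as a list of rows (matrix-vector product)."""
    return [sum(w * f for w, f in zip(row, features)) for row in weights]


def proxy_loss(proxy_features, heads, expert_features):
    """Step 1 (assumed form): the large proxy matches every expert at once.

    One hypothetical projection head per expert maps the proxy's features
    into that expert's feature space; the per-expert losses are averaged.
    """
    losses = [
        cosine_distance(linear(heads[name], proxy_features), target)
        for name, target in expert_features.items()
    ]
    return sum(losses) / len(losses)


def student_loss(student_features, head, proxy_features):
    """Step 2 (assumed form): the small student matches only the proxy."""
    return cosine_distance(linear(head, student_features), proxy_features)


# Toy values: two experts with 2-d features, a 3-d proxy, a 2-d student.
expert_features = {"expert_a": [1.0, 0.0], "expert_b": [0.0, 1.0]}
proxy_features = [1.0, 1.0, 0.0]
heads = {
    "expert_a": [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]],
    "expert_b": [[0.0, 0.0, 1.0], [0.0, 1.0, 0.0]],
}
student_features = [2.0, 2.0]
student_head = [[0.5, 0.0], [0.0, 0.5], [0.0, 0.0]]

step1 = proxy_loss(proxy_features, heads, expert_features)
step2 = student_loss(student_features, student_head, proxy_features)
```

The point of the sketch is structural: the student's objective has a single target (the proxy), whereas the proxy's objective sums over all experts. In a real pipeline both losses would be minimized by gradient descent over image batches, with the experts frozen in the first step and the proxy frozen in the second.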

WHY it matters? Deploying multimodal foundation models on edge devices typically requires hot-swapping specialized encoders (e.g., one for depth, another for OCR), incurring prohibitive memory and compute costs. By showing that efficient backbones lack the parameter capacity to unify divergent expert representations directly, this research positions an intermediate aggregation step as a necessary bridge for building highly capable, multi-task mobile architectures.

Executive summary: Relying on disjoint foundation models is computationally infeasible for edge AI that needs seamless, multi-domain perception. The authors show that existing methods of agglomerating multiple teachers directly into a small student fail because tiny models cannot resolve conflicting latent geometries. The pipeline instead shifts the complex task of knowledge unification to a heavy intermediate proxy model and only then compresses that single, unified representation into a lightweight backbone. The resulting model rivals domain-specific experts of the same size across image understanding, dense prediction, and vision-language benchmarks.