Fast Byte Latent Transformer

Authors: Julie Kallini, Artidoro Pagnoni, Tomasz Limisiewicz, Gargi Ghosh, Luke Zettlemoyer, Christopher Potts, Xiaochuang Han, Srinivasan Iyer

Paper: https://arxiv.org/abs/2605.08044v1

Code: N/A

Model: N/A

TL;DR

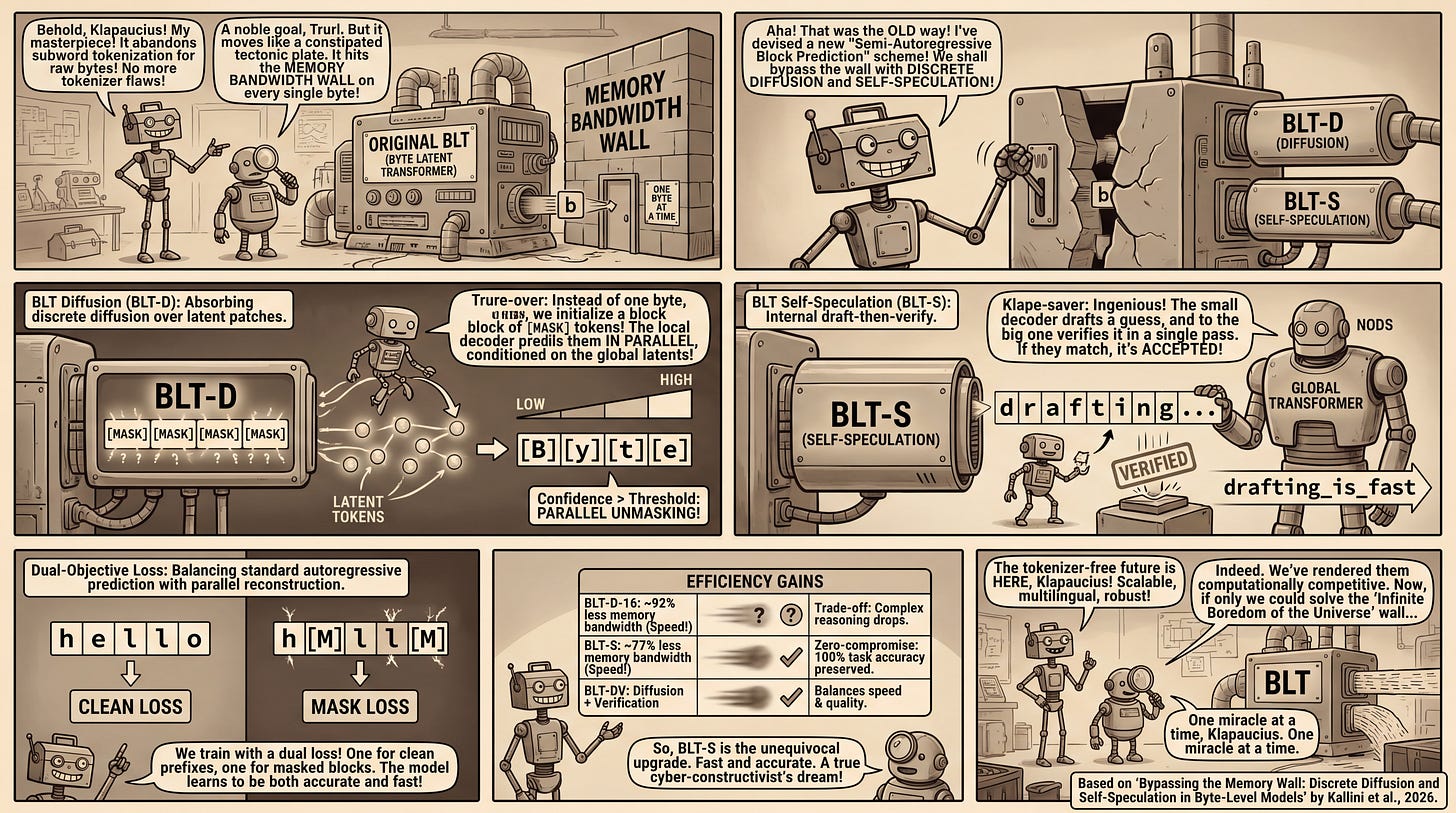

WHAT was done? The authors introduce three novel generation techniques—BLT Diffusion (BLT-D), BLT Self-speculation (BLT-S), and BLT Diffusion+Verification (BLT-DV)—to enable parallel byte decoding in hierarchical language models. By utilizing block-wise discrete diffusion and internal self-speculation, they bypass strict byte-by-byte autoregressive bottlenecks.

WHY it matters? Byte-level architectures inherently resolve subword tokenization flaws (like adversarial fragility and multilingual disparity) but have been crippled by slow inference speeds. By dramatically reducing memory bandwidth costs—by up to 92% in some configurations—these techniques render tokenizer-free foundation models computationally competitive for real-world deployment.

Details

The Memory Bandwidth Wall in Tokenizer-Free Architectures

To understand the significance of this work, we must first look at the baseline: the original Byte Latent Transformer [human review]. The original BLT was a conceptual leap because it abandoned subword tokenization in favor of raw byte processing, solving the severe compute overhead of byte-level models via dynamic entropy-based patching. Instead of running a massive global transformer on every byte, the original architecture grouped highly predictable bytes into long patches and high-entropy bytes into short patches, compressing them into latent representations. However, while the original BLT elegantly solved the compute bottleneck during training, it collided with a different wall during inference: memory bandwidth. Because the local decoder in the original BLT still generates output sequentially, one byte at a time, the system must repeatedly load massive global model weights and access key-value caches for every single subword equivalent. The current paper structurally resolves this sequential decoding bottleneck without sacrificing the original model’s dynamic patching.