Authors: Jenny Zhang, Bingchen Zhao, Wannan Yang, Jakob Foerster, Jeff Clune, Minqi Jiang, Sam Devlin, Tatiana Shavrina

Paper: https://arxiv.org/abs/2603.19461

Code: https://github.com/facebookresearch/Hyperagents

TL;DR

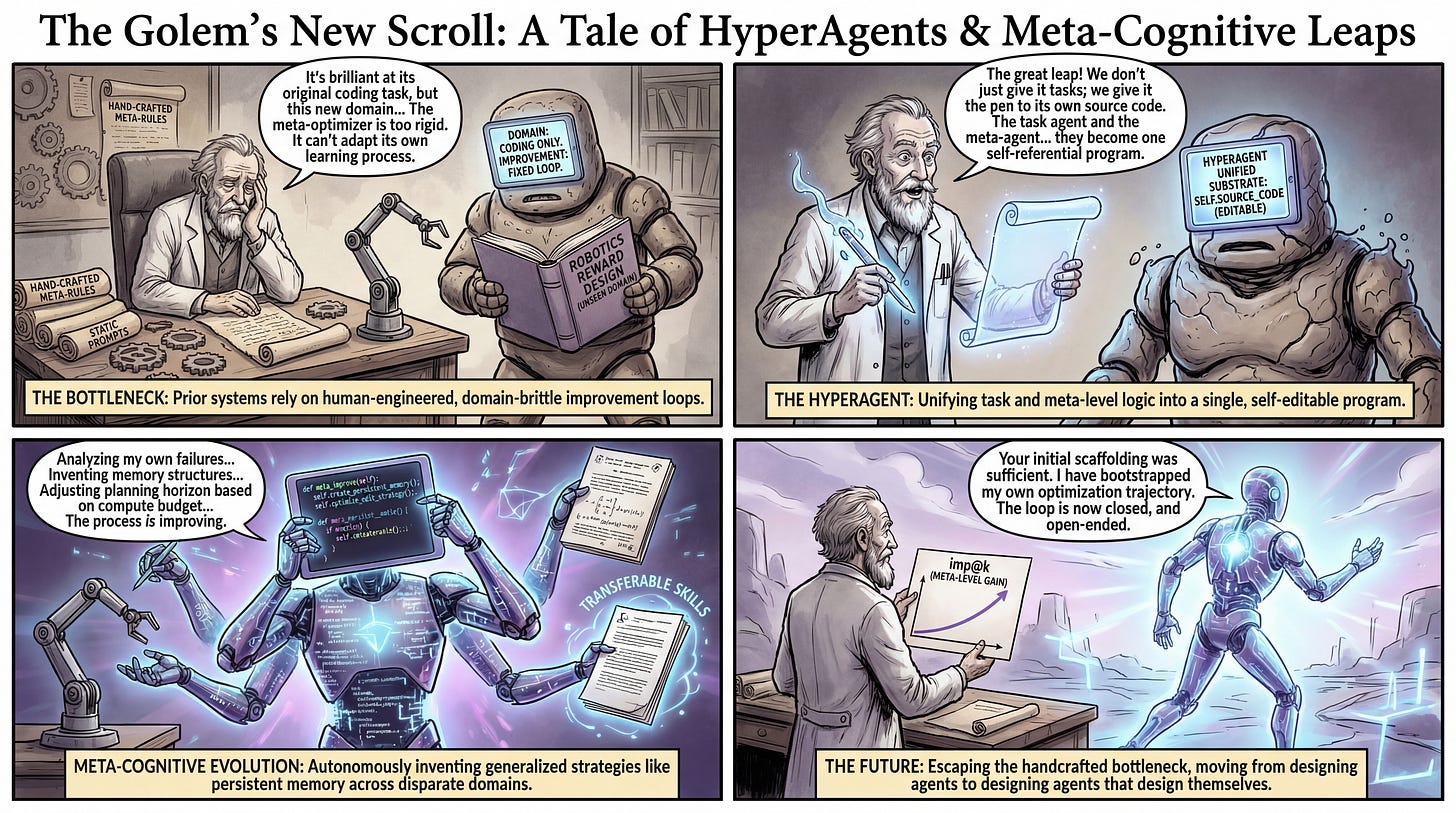

WHAT was done? The authors introduced DGM-Hyperagents (DGM-H), a framework that unifies a task-solving agent and a meta-optimizing agent into a single, fully editable self-referential program. By embedding this combined entity within an open-ended evolutionary search, the system autonomously rewrites both its task-execution logic and its own underlying self-improvement mechanisms.

WHY it matters? Prior self-improving systems are bottlenecked by human-engineered meta-learning algorithms that fail to generalize across domains. DGM-H demonstrates that an agent can autonomously invent transferable optimization techniques, such as persistent memory systems and automated bias detection. This lets performance gains and meta-level capabilities compound across domains as different as robotics reward design and Olympiad-level math grading.

Executive summary: For research leaders and domain experts focused on scalable alignment and open-endedness, this paper from Meta FAIR and university collaborators provides a blueprint for systems that do not merely get better at a task, but get better at the process of getting better. By making the meta-learning mechanism explicitly programmable and editable by the agent itself, the authors avoid having to hand-engineer domain-specific improvement heuristics and point toward self-accelerating optimization architectures.

Details

The Domain-Alignment Bottleneck

A persistent challenge in recursive self-improvement is the reliance on static, human-designed meta-level mechanisms. Recent frameworks like the Darwin Gödel Machine (DGM) [review] successfully demonstrated self-improvement by having coding agents iteratively rewrite their own source code. However, this success relies heavily on a strict alignment between the evaluation domain and the self-improvement domain: both are fundamentally coding tasks, so getting better at coding also means getting better at editing one's own code. When applied to non-coding environments, such as designing robotics reward functions or conducting subjective paper reviews, the original DGM framework falters. The meta-agent in such systems uses a fixed, handcrafted instruction-generation prompt tailored explicitly for software engineering. Consequently, while the agent's task performance might nominally increase, its intrinsic ability to generate better agents remains stagnant. The key change proposed in this work is to eliminate this fixed meta-level prompt and replace it with a flexible architecture in which the meta-level modification procedure is as editable as the task logic itself.
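The contrast between a fixed and an editable meta level can be illustrated with a deliberately tiny toy model. This is only a sketch under stated assumptions, not the authors' implementation: the `Agent` fields, both update functions, and the 1.5x growth factor are hypothetical and chosen purely to make the structural difference visible. No numbers here come from the paper.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Agent:
    """Toy stand-in for an agent program (all fields are hypothetical)."""
    skill: float      # task-level quality: how well the agent solves tasks
    meta_step: float  # meta-level quality: how much one self-edit improves skill


def fixed_meta_update(agent: Agent) -> Agent:
    """Fixed meta level: a self-edit changes task logic only."""
    return replace(agent, skill=agent.skill + agent.meta_step)


def editable_meta_update(agent: Agent) -> Agent:
    """Editable meta level: a self-edit may also rewrite the improvement procedure."""
    improved = replace(agent, skill=agent.skill + agent.meta_step)
    # Assumption for illustration only: each edit makes the next edit 1.5x stronger.
    return replace(improved, meta_step=improved.meta_step * 1.5)


def run(update, generations: int = 5) -> Agent:
    """Apply the given self-modification step for a number of generations."""
    agent = Agent(skill=0.0, meta_step=1.0)
    for _ in range(generations):
        agent = update(agent)
    return agent


fixed = run(fixed_meta_update)
editable = run(editable_meta_update)
print(fixed)     # skill grows, meta_step never changes
print(editable)  # both skill and meta_step grow
```

In the fixed variant the agent's skill rises while `meta_step` stays at its initial value, which mirrors the stagnation described above. In the editable variant the improvement procedure is itself part of what gets rewritten, so later edits are stronger than earlier ones.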