Learning is Forgetting: LLM Training As Lossy Compression

Authors: Henry C. Conklin, Tom Hosking, Tan Yi-Chern, Julian Gold, Jonathan D. Cohen, Thomas L. Griffiths, Max Bartolo, Seraphina Goldfarb-Tarrant

Paper: https://arxiv.org/abs/2604.07569v1

Code: https://github.com/hcoxec/soft_h

TL;DR

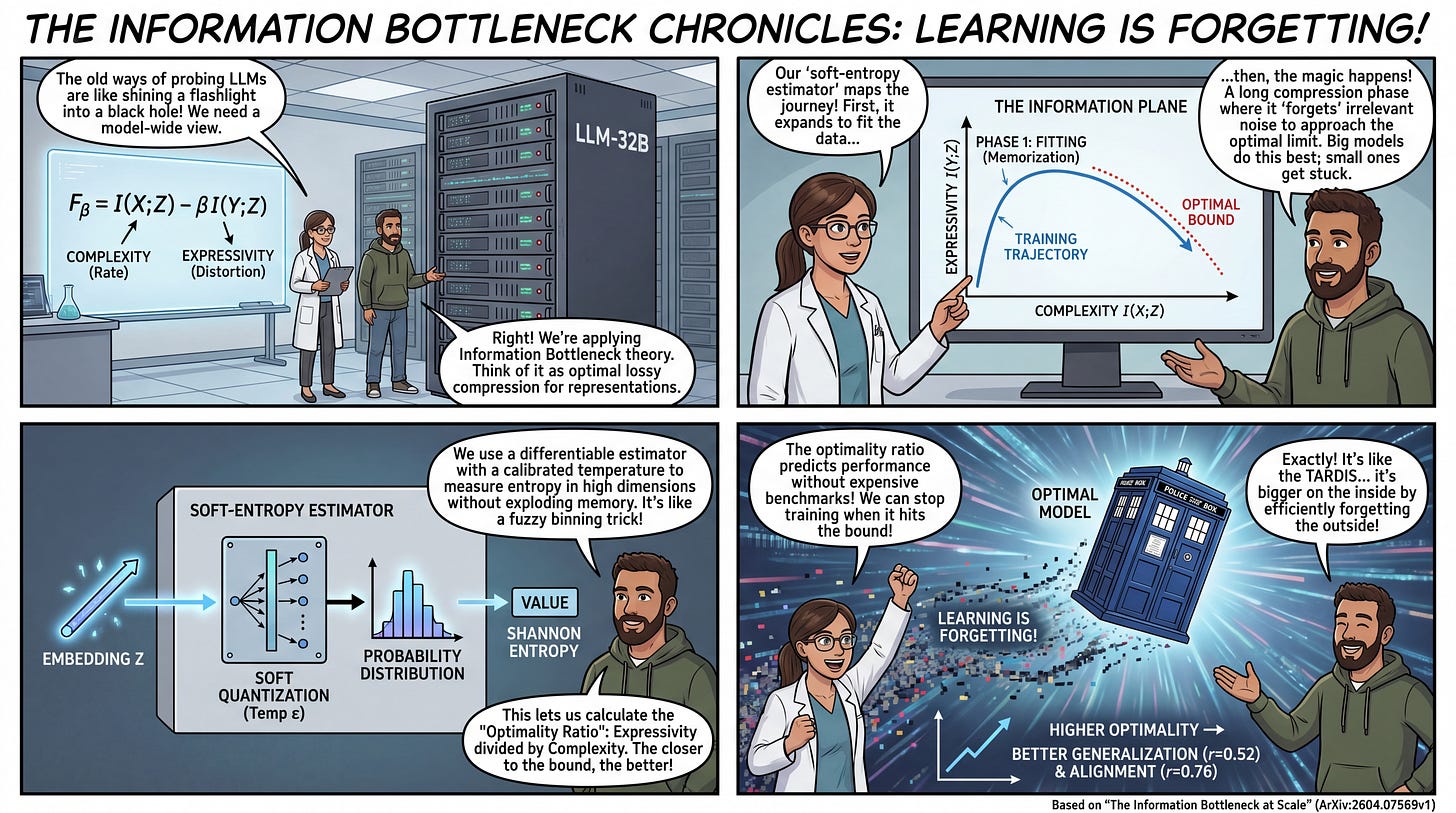

WHAT was done? Researchers from Princeton University and Cohere successfully applied Information Bottleneck (IB) theory to large language models of up to 32 billion parameters. By introducing a differentiable “soft-entropy estimator,” they mapped the pre-training trajectories of large transformers onto the information plane, revealing that training follows a distinct two-phase process: an initial expansion of representations to fit target labels, followed by a prolonged compression phase where irrelevant input data is forgotten.

WHY it matters? This work provides a holistic, model-wide alternative to mechanistic interpretability. It demonstrates that the degree to which a model approaches the optimal limit of lossy compression strictly predicts its performance on complex downstream benchmarks (r=0.52) and human preference alignment (r=0.76). Consequently, this introduces a viable path to use unsupervised information-theoretic metrics for early stopping and model selection, drastically reducing reliance on compute-heavy, task-specific evaluation suites.

Details

The Interpretability Scale Bottleneck

Understanding exactly how deep neural networks map inputs to representations has long been divided between two paradigms. On one side, behavioral probing treats models as black boxes, measuring output characteristics rather than the representational structures driving them. On the other side, mechanistic interpretability zeroes in on distinct circuits, attention heads, or singular neurons. While valuable, complex distributed systems cannot be entirely explained by enumerating their sub-components.

A more theoretical approach to representation learning is rooted in Rate Distortion Theory. Prior work, such as Opening the black box of deep neural networks via information, successfully modeled deep learning as an optimization of the Information Bottleneck, wherein networks first memorize and then compress data. However, this empirical validation was largely confined to small multi-layer perceptrons trained on MNIST. Scaling information-theoretic measurements to generative models trained on trillions of tokens hit a mathematical wall: accurately computing Shannon entropy on continuous, high-dimensional manifolds using traditional binning or k-means clustering requires caching states that far exceed modern memory limits. The authors of the current paper bypassed this bottleneck by introducing a differentiable approach to entropy estimation, successfully mapping the internal dynamics of production-scale architectures.