Memory Intelligence Agent

Authors: Jingyang Qiao, Weicheng Meng, Yu Cheng, Zhihang Lin, Zhizhong Zhang, Xin Tan, Jingyu Gong, Kun Shao, Yuan Xie

Paper: https://arxiv.org/abs/2604.04503v2

Code: https://github.com/ECNU-SII/MIA

Model: https://huggingface.co/LightningCreeper/MIA

TL;DR

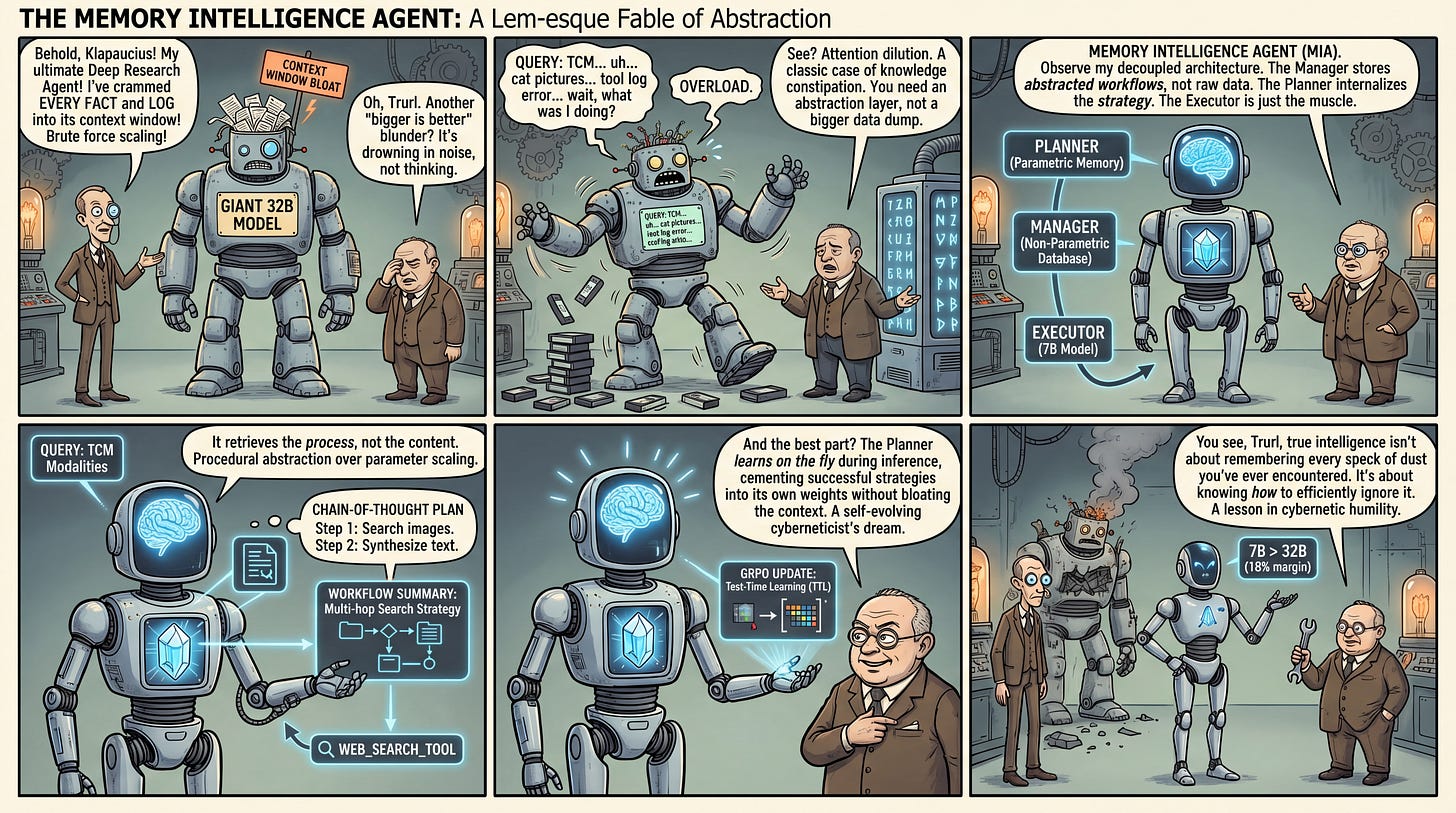

WHAT was done? The authors propose the Memory Intelligence Agent (MIA), a framework that restructures autonomous agent reasoning into a decoupled Manager-Planner-Executor architecture. It transitions from retrieving factual knowledge to internalizing procedural search strategies by combining an explicit non-parametric memory buffer with continuous parametric updates via reinforcement learning, even during inference (Test-Time Learning).

WHY it matters? This work empirically demonstrates that intelligent memory management and strategic abstraction can bridge the performance gap between small and large models. By using a 7B-parameter Executor to outperform a 32B-parameter model by an 18% margin, MIA establishes that internalizing the “how” of problem-solving is more computationally efficient and scalable than simply expanding context windows or scaling raw model parameters.

Executive summary: Modern deep research agents frequently suffer from memory bloat and attention dilution when processing extensive execution histories. The MIA framework addresses this by compressing raw interaction traces into high-level workflow summaries, which are then used to dynamically update a dedicated planning agent via an alternating reinforcement learning paradigm. For AI strategists and system architects, this signals a shift toward self-evolving, unsupervised agent architectures where learning continuous task-specific procedures during inference yields superior returns compared to static, knowledge-heavy context retrieval.

Details

The Context Trap in Deep Research Agents

The pursuit of capable deep research agents is currently bottlenecked by how these systems manage historical experience. Existing frameworks default to expanding the context window, effectively dumping raw execution traces, tool logs, and factual documents into the model’s prompt. This approach predictably leads to severe attention dilution, computational bloat, and the introduction of irrelevant noise that degrades complex reasoning. The researchers behind this work pivot away from this knowledge-centric paradigm, focusing instead on procedural memory. By isolating the process of how a successful result is obtained—such as search trajectories and strategic pivots—from the factual content of the result itself, the MIA framework establishes a structural delta from standard retrieval-augmented generation.

Theoretical Substrate: Decoupling Procedural Memory

The foundation of MIA rests on a strict demarcation between non-parametric and parametric memory, utilizing a three-node Manager-Planner-Executor topology. Non-parametric memory is conceptualized as an explicit database managed by the Manager agent. Instead of storing raw text, it houses abstracted, structured “workflow summaries” embedded in a unified vector space. Conversely, parametric memory represents the latent search strategies baked directly into the weight matrices of the Planner agent. By synchronously bridging these two states, the framework guarantees that historical experiences are used as an explicit contrastive reference for immediate contextual awareness, while also driving long-term consolidation into the model’s intrinsic reasoning capabilities.