Seeing, Listening, Remembering, and Reasoning: A Multimodal Agent with Long-Term Memory

Authors: Lin Long, Yichen He, Wentao Ye, Yiyuan Pan, Yuan Lin, Hang Li, Junbo Zhao, Wei Li

Paper: https://arxiv.org/abs/2508.09736

Code: https://github.com/bytedance-seed/m3-agent

TL;DR

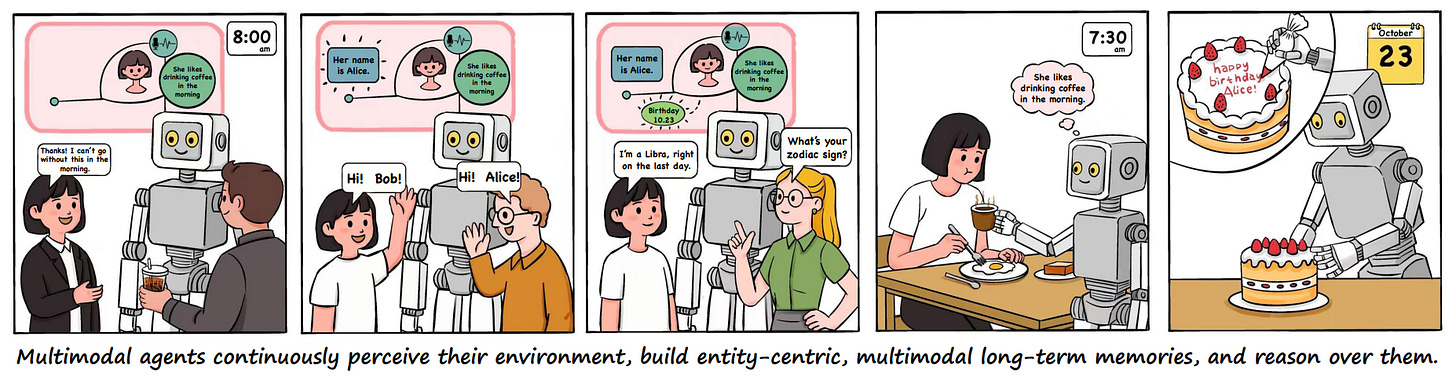

WHAT was done? The paper introduces M3-Agent, a novel framework for multimodal AI agents equipped with a human-like long-term memory. This agent continuously processes real-time video and audio streams to build and update two types of memory: episodic (for specific events) and semantic (for accumulated world knowledge). The memory is structured as an entity-centric, multimodal graph, enabling consistent tracking of entities like people across different modalities. The agent employs reinforcement learning (DAPO, https://arxiv.org/abs/2503.14476) to perform multi-turn, iterative reasoning over this memory to accomplish complex tasks. To evaluate these capabilities, the authors also developed M3-Bench, a new long-video question-answering benchmark featuring real-world videos and challenging questions focused on memory-based reasoning.
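
To make the idea of an entity-centric, multimodal memory graph more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the paper's implementation: the names `MemoryNode`, `MemoryGraph`, `link`, and `about`, the modality labels, and the example sentences are all hypothetical. It only shows how face and voice nodes for one person might be linked so that episodic and semantic text memories attach to the same entity.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    node_id: int
    kind: str      # "episodic" or "semantic", the two memory types described above
    modality: str  # "text", "face", or "voice" (hypothetical labels)
    content: str   # text, or a placeholder standing in for an embedding


@dataclass
class MemoryGraph:
    nodes: dict = field(default_factory=dict)  # node_id -> MemoryNode
    edges: dict = field(default_factory=dict)  # node_id -> set of neighbor ids

    def add_node(self, kind, modality, content):
        node_id = len(self.nodes)
        self.nodes[node_id] = MemoryNode(node_id, kind, modality, content)
        self.edges[node_id] = set()
        return node_id

    def link(self, a, b):
        # Undirected edge: both nodes refer to, or describe, the same entity.
        self.edges[a].add(b)
        self.edges[b].add(a)

    def about(self, entity_id, kind=None):
        # Text memories attached to an entity, optionally filtered by memory type.
        neighbors = (self.nodes[n] for n in sorted(self.edges[entity_id]))
        return [n for n in neighbors
                if n.modality == "text" and (kind is None or n.kind == kind)]


graph = MemoryGraph()
face = graph.add_node("semantic", "face", "<face embedding placeholder>")
voice = graph.add_node("semantic", "voice", "<voice embedding placeholder>")
graph.link(face, voice)  # the same person, seen and heard

event = graph.add_node("episodic", "text", "The person picks up a coffee cup.")
fact = graph.add_node("semantic", "text", "The person prefers coffee in the morning.")
graph.link(face, event)
graph.link(face, fact)

episodic_memories = [n.content for n in graph.about(face, kind="episodic")]
```

In this toy version, asking for everything linked to the face node returns both the specific event and the accumulated fact, while the voice node stays connected to the same entity without being returned as a text memory.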

WHY it matters? This work is a step toward more autonomous and cognitively sophisticated AI agents. By moving beyond the limitations of fixed context windows and simple retrieval, M3-Agent tackles the core challenges of continuous learning, long-term knowledge retention, and consistent reasoning in dynamic environments. Many agent frameworks implement "memory" as simple vector lookups, an approach that often lacks consistency. M3-Agent's structured, graph-based memory instead builds explicit relationships between entities, providing a foundation for next-generation applications such as household robots, personal assistants, and human-AI collaboration systems.

Details

Introduction: From Fleeting Perception to Lasting Memory

A fundamental goal in AI is to create agents that can interact with the world in a manner similar to humans: perceiving, learning, and reasoning over time. A key component of this intelligence is long-term memory. While recent large models have shown remarkable capabilities, they often operate with a limited, fleeting context. They can process what is immediately in front of them but struggle to build a persistent, evolving understanding of the world from a continuous stream of experiences.

The paper "Seeing, Listening, Remembering, and Reasoning: A Multimodal Agent with Long-Term Memory" introduces M3-Agent, a framework that directly confronts this challenge. It presents an architecture designed to mimic human cognitive processes, enabling an agent not only to see and listen but also to remember and reason over its accumulated knowledge.