Speculative Speculative Decoding

Authors: Tanishq Kumar, Tri Dao, Avner May

Paper: https://arxiv.org/abs/2603.03251

Code: https://github.com/tanishqkumar/ssd

TL;DR

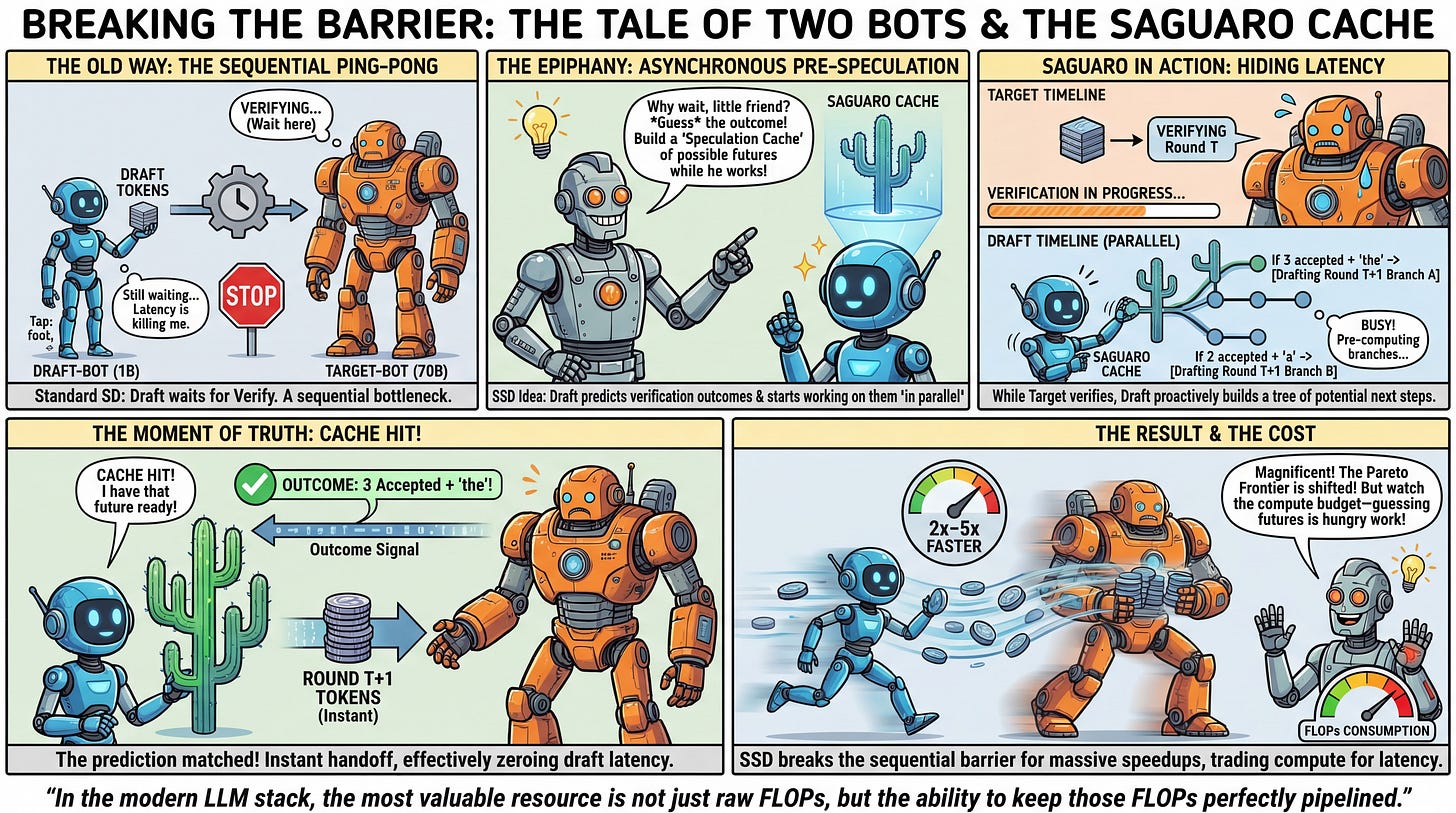

WHAT was done? The authors introduce Speculative Speculative Decoding (SSD) and its optimized implementation, Saguaro. SSD breaks the sequential dependency between drafting and verification in standard speculative decoding by having the draft model predict verification outcomes and proactively generate speculations for those outcomes in parallel with the target model’s verification pass.

WHY it matters? By effectively hiding drafting latency behind verification compute, SSD achieves up to a 2x speedup over optimized speculative decoding baselines and up to a 5x speedup over standard autoregressive decoding. Crucially, it pushes the strict latency-throughput Pareto frontier outward, demonstrating that speculative methods can be made more compute-efficient per device through aggressive asynchronous parallelism.

Details

The Verification Bottleneck in Speculative Decoding

Autoregressive language model decoding is fundamentally constrained by memory bandwidth, generating tokens sequentially. While Speculative Decoding (SD) elegantly mitigates this by utilizing a fast draft model to propose tokens for a larger target model to verify in parallel, it introduces a new sequential bottleneck. In standard SD, the draft model must wait idly for the target model to complete its verification forward pass before it can begin proposing the next sequence of tokens. This alternating ping-pong limits the theoretical maximum speedup. The researchers from Stanford, Princeton, and Together AI propose a framework that asks a simple structural question: what if the draft model never stops? The resulting architecture shifts from a sequential draft-verify loop to a continuous, asynchronous pipeline where the draft model utilizes verification time to “pre-speculate” the most probable outcomes of the ongoing verification step.