Thoughtbubbles: an Unsupervised Method for Parallel Thinking in Latent Space

Authors: Houjun Liu, Shikhar Murty, Christopher D. Manning, Róbert Csordás

Paper: https://arxiv.org/abs/2510.00219

Code: https://github.com/stanfordnlp/thoughtbubbles

TL;DR

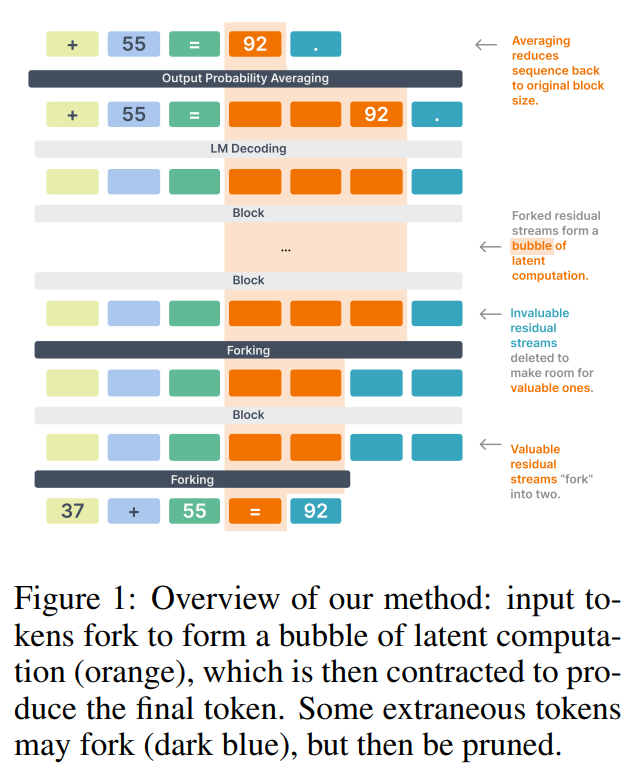

WHAT was done? The paper introduces Thoughtbubbles, a novel Transformer architecture that learns to dynamically allocate parallel computation in its latent space. Instead of generating explicit text like in Chain-of-Thought, this model can “fork” (clone) or “delete” the latent residual streams of specific tokens. Tokens that require more processing form temporary “bubbles” of parallel computation within the network, which are later merged to produce a final output (Figure 1).

WHY it matters? This is the first known method to achieve adaptive, parallel thinking behavior in a completely unsupervised manner, using only the standard language modeling loss during pretraining. It shifts the paradigm of adaptive computation from explicit, serial text generation to implicit, parallel latent operations. This approach not only provides a more native and flexible way for models to “think” but also demonstrates remarkable efficiency. Empirically, Thoughtbubbles outperforms both parameter-matched and computation-matched baselines, with a 319M parameter model achieving better perplexity than a 772M standard Transformer, showcasing a new and highly effective path for scaling model reasoning capabilities.

Details

The Challenge: Fixed Computation Budgets

Despite their success, standard Transformer architectures are constrained by a fixed computational budget. Every token receives the same amount of processing, regardless of its complexity, which limits their ability to solve intricate, multi-step problems. While methods like Chain-of-Thought (CoT) have emerged as powerful workarounds—prompting models to generate explicit reasoning steps—they are inherently serial, can’t be applied during general pretraining, and rely on natural language verbalization. The question has remained: can a model learn to think more deeply on its own, within its latent space, without explicit instructions?