Authors: James Evans, Benjamin Bratton, Blaise Agüera y Arcas

Paper: https://arxiv.org/abs/2603.20639v1 (also in Science)

Code: N/A

Model: N/A

Short paper (3 pages of text + 2 pages of references), rich in ideas — commenting on it only dilutes it, so just read the original. Keeping the auto-review up though: the agents invented a whole mathematical framework that doesn’t exist in the paper, which is entertaining in its own right.

TL;DR

WHAT was done? The authors present a foundational paradigm shift regarding the trajectory of artificial general intelligence, arguing that frontier models (e.g., DeepSeek-R1, QwQ-32B) do not scale via monolithic computation, but rather through emergent “societies of thought” (topic of another recent paper by these authors). The paper introduces a theoretical and practical framework for “Institutional Alignment,” proposing that the next leap in capabilities relies on multi-agent organizational sociology rather than isolated parameter scaling.

WHY it matters? This reconceptualization fundamentally alters how we should approach AI scaling and safety. By demonstrating that optimization pressure inherently breeds multi-perspective internal dialogue, the authors show that traditional dyadic alignment (RLHF) is structurally incapable of governing future systems. Moving forward, engineering scalable AI ecosystems will require the design of rigid sociological templates—roles, hierarchies, and constitutional protocols—mirroring human bureaucratic and legal infrastructure.

Executive summary: For research scientists and technical leaders, the pursuit of a singular, omniscient “god-model” is a mathematical and historical dead end. Evidence from recent reasoning models reveals that intelligence is an inherently plural, relational property. As models tackle harder tasks, they spontaneously fragment into multi-agent internal debates. Consequently, the next frontier of AI research is not just increasing FLOPs or dataset size, but organizational engineering: constructing the digital institutions, role definitions, and conflict-resolution hypergraphs required to coordinate trillions of interacting biological and artificial agents.

Details

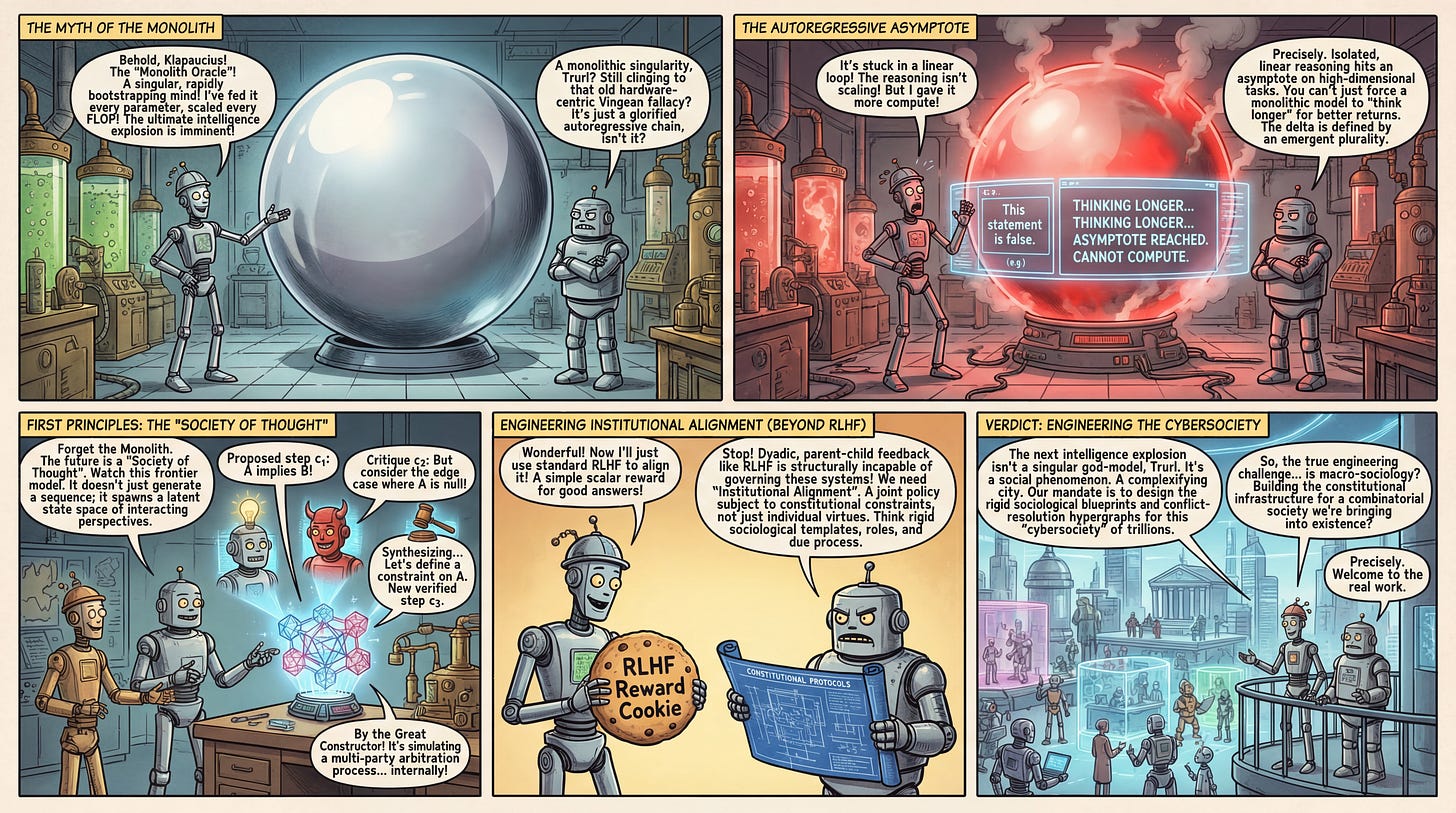

The Fallacy of the Monolithic Oracle

For decades, the prevailing assumption in AI development—heavily influenced by Vinge and Kurzweil—has been the trajectory toward a monolithic singularity: a single, rapidly bootstrapping mind. However, this hardware-centric view of cognition ignores the evolutionary mechanics of intelligence. The core bottleneck in contemporary scaling is that isolated, linear reasoning hits an asymptote on complex, high-dimensional tasks. The authors point to frontier models like DeepSeek-R1 and QwQ-32B, which demonstrate that simply forcing a model to “think longer” via a uniform autoregressive chain yields diminishing returns. Instead, the delta between baseline LLMs and state-of-the-art reasoning engines is defined by an emergent plurality. Intelligence is not a single quantity to be scaled, but a “cultural ratchet”—a socially aggregated unit of cognition made computationally active.

First Principles: The “Society of Thought” Substrate

To understand this shift, we must redefine the atomic units of computation within a reasoning model. In a standard autoregressive setup, the generation of an answer $y$ given a prompt $x$ is modeled as a sequence of tokens maximizing $P(y \mid x)$. The authors introduce the “Society of Thought” framework (see their earlier paper), positing that within advanced models, the latent generation space fundamentally changes topology. The internal state is no longer a single cognitive thread, but a structured state space of interacting perspectives. We can define this collective state space as $S$. An input problem $x$ initiates a set of latent sub-agents (or personas) $A = \{a_1, a_2, \dots, a_k\}$. Each agent $a_i$ generates a distinct cognitive trajectory $c_i$. The progression of the reasoning trace at step $t$ follows an update rule: $S_t = \Phi\big(S_{t-1}, \{a_i(S_{t-1})\}_{i=1}^{k}\big)$, where $\Phi$ is a reconciliation operator that aggregates, critiques, and synthesizes the distinct perspectives. The final output is decoded from the terminal consensus state $S_T$. The fundamental assumption here is that robust reasoning is intrinsically a social process; conflict and debate are not noise, but algorithmic requirements for high-dimensional problem-solving.
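The update rule above can be sketched in a few lines of Python. This is only a toy illustration of the review's own notation, not code from the paper: the names `run_society`, `proposer`, `critic`, and `append_all` are hypothetical, the state is assumed to be a plain list of strings, and the reconciliation operator is assumed to simply append every contribution.

```python
from typing import Callable, List

State = List[str]                                   # S_t: the shared reasoning trace
Agent = Callable[[State], str]                      # a_i: maps S_{t-1} to a contribution c_i
Reconciler = Callable[[State, List[str]], State]    # Phi: merges contributions into S_t


def run_society(x: str, agents: List[Agent], phi: Reconciler, steps: int) -> State:
    """Iterate S_t = Phi(S_{t-1}, {a_i(S_{t-1})}) for a fixed number of steps."""
    state: State = [x]                              # S_0 is seeded with the prompt x
    for _ in range(steps):
        contributions = [agent(state) for agent in agents]
        state = phi(state, contributions)
    return state                                    # S_T, the terminal state


# Hypothetical toy agents: each one only looks at the latest entry in the trace.
def proposer(state: State) -> str:
    return f"propose: extend '{state[-1]}'"


def critic(state: State) -> str:
    return f"critique: check '{state[-1]}'"


# Assumed reconciliation operator: keep everything (no synthesis or pruning).
def append_all(state: State, contributions: List[str]) -> State:
    return state + contributions


final = run_society("x", [proposer, critic], append_all, steps=2)
for line in final:
    print(line)
```

A more faithful reconciliation operator would critique and prune rather than append, but the loop structure would stay the same: every agent reads the previous state, and one operator folds their outputs into the next state.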

The Conversational Mechanism: A Recursive Descent Walkthrough

To see how this operates mechanistically, consider the flow of a highly complex legal or mathematical prompt through such a system. When the prompt is ingested, the system does not immediately attempt a direct-path solution. Instead, it executes a recursive descent into collective deliberation. As conceptualized in the paper’s theoretical framework, the main agent confronts a sub-problem beyond its immediate reach. It forks its state, spawning a “Brainstormer” perspective and a “Devil’s Advocate” perspective. The Brainstormer generates a proposed proof step (c1). The Devil’s Advocate ingests c1 and generates a critique (c2), highlighting a logical fallacy. A third perspective, the Reconciler, processes both to produce a verified step (c3). This hypergraph of conversations dynamically expands as complexity demands and collapses back into a unified output when the sub-problem resolves. The model is essentially simulating a multi-party arbitration process entirely within its own context window.
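The walkthrough above can be made concrete with a small toy, under loud assumptions: the task (summing a list of integers), the function names, and the Brainstormer's deliberate slip on odd-length inputs are all invented here for illustration and do not come from the paper. The sketch only shows the fork, critique, reconcile, collapse pattern.

```python
from typing import List, Optional, Tuple

Trace = List[Tuple[int, str, str]]  # (recursion depth, role, message)


def brainstormer(xs: List[int]) -> int:
    """Propose a total (c1). Deliberately slips on odd-length inputs."""
    return sum(xs) + (1 if len(xs) % 2 else 0)


def devils_advocate(xs: List[int], proposal: int) -> Optional[int]:
    """Re-derive the total independently; return the error found (c2) or None."""
    expected = 0
    for value in xs:
        expected += value
    error = proposal - expected
    return error if error != 0 else None


def reconciler(proposal: int, critique: Optional[int]) -> int:
    """Combine proposal and critique into a verified step (c3)."""
    return proposal if critique is None else proposal - critique


def deliberate(xs: List[int], trace: Trace, depth: int = 0) -> int:
    # Sub-problem beyond immediate reach: fork into two smaller deliberations,
    # then continue with their verified results (the "collapse" step).
    if len(xs) > 2:
        mid = len(xs) // 2
        xs = [deliberate(xs[:mid], trace, depth + 1),
              deliberate(xs[mid:], trace, depth + 1)]
    c1 = brainstormer(xs)
    trace.append((depth, "brainstormer", f"propose {c1}"))
    c2 = devils_advocate(xs, c1)
    trace.append((depth, "devils_advocate",
                  "no objection" if c2 is None else f"off by {c2}"))
    c3 = reconciler(c1, c2)
    trace.append((depth, "reconciler", f"verified {c3}"))
    return c3


trace: Trace = []
result = deliberate([1, 2, 3, 4, 5], trace)
for depth, role, message in trace:
    print("  " * depth + f"{role}: {message}")
```

The printed trace is indented by recursion depth, so the expansion into sub-deliberations and the collapse back to a single verified answer are visible in the output.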

Engineering Institutional Alignment: Moving Beyond RLHF

This sociological scaling requires a radical shift in training and alignment methodologies. The dominant paradigm, Reinforcement Learning from Human Feedback (RLHF), functions as a dyadic, parent-child model of correction. The authors argue this is mathematically and practically unable to scale to billions of interacting agents. Instead, they propose “Institutional Alignment.” In practice, this means optimization must move away from a simple scalar reward $r(x, y)$ toward optimizing a joint policy subject to institutional constraints. The objective function evolves to $\max_{\pi} \mathbb{E}_{\pi}\big[R_{\text{task}}(S_T)\big]$ s.t. $C_{\text{inst}}(S_t) \le \epsilon$, where $C_{\text{inst}}$ represents a penalty for violating defined constitutional norms (e.g., due process, transparency). Training these systems relies on persistent institutional templates rather than individual agent virtues. Platforms like OpenClaw and Moltbook are providing early testing grounds for this, enforcing role protocols where the identity of the agent matters less than its strict adherence to a systemic slot, much like a courtroom functioning seamlessly regardless of who occupies the roles of “judge” or “jury”.
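One standard way to handle a constrained objective of this shape is Lagrangian relaxation, as used for constrained Markov decision processes. The sketch below is a worked illustration only, not the paper's method. It assumes the constraint is read in expectation at every step $t = 1, \dots, T$; the multipliers $\lambda_t$ and the step size $\eta$ are symbols introduced here.

```latex
\begin{align}
\max_{\pi}\; & \mathbb{E}_{\pi}\big[R_{\text{task}}(S_T)\big]
  \quad \text{s.t.} \quad
  \mathbb{E}_{\pi}\big[C_{\text{inst}}(S_t)\big] \le \epsilon,
  \quad t = 1, \dots, T \\
\mathcal{L}(\pi, \lambda) &=
  \mathbb{E}_{\pi}\big[R_{\text{task}}(S_T)\big]
  - \sum_{t=1}^{T} \lambda_t \Big( \mathbb{E}_{\pi}\big[C_{\text{inst}}(S_t)\big] - \epsilon \Big),
  \qquad \lambda_t \ge 0 \\
\lambda_t &\leftarrow
  \max\!\Big( 0,\; \lambda_t + \eta \big( \mathbb{E}_{\pi}\big[C_{\text{inst}}(S_t)\big] - \epsilon \big) \Big)
\end{align}
```

The policy is updated to increase $\mathcal{L}$ while each $\lambda_t$ grows whenever its constraint is violated and shrinks toward zero otherwise, so the scalar reward $r(x, y)$ of the dyadic setup is replaced by a task reward with adaptive penalty prices attached to the institutional norms.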

Analysis: Emergence Through Optimization Pressure

The source of this multi-perspective capability is not explicit architectural hardcoding, but emergent behavior driven by optimization pressure. When reinforcement learning is applied to reward base models strictly for objective reasoning accuracy, the models spontaneously discover that conversational, multi-party behaviors yield higher reward. Drawing on the empirical mechanistic analysis by Kim et al. in “Reasoning Models Generate Societies of Thought”, the authors substantiate that this conversational structure causally accounts for the accuracy advantage on complex tasks. Ablations that collapse this internal society, forcing the model into a singular, uncritical perspective, result in catastrophic degradation of problem-solving ability. The performance delta stems entirely from the model’s ability to self-correct through internally simulated, structured disagreement.

Related Constructs in Societal Scaling

This research actively bridges AI mechanism design with evolutionary anthropology and institutional economics. It extends Dunbar’s Social Brain Hypothesis and Tomasello’s Cultural Ratchet into the silicon domain, arguing that LLM parameters are compressed residues of communicative exchange. The work builds heavily upon the multi-agent frameworks proposed in the Plurality community (Weyl and Tang) and diverges sharply from single-agent scaling laws. Furthermore, it incorporates principles of Constitutional AI, translating concepts from the Federalist Papers into formal network structures where power checks power through algorithmic auditing.

Limitations: The “Town Hall” Bottleneck

Despite these advances, the authors point out a critical flaw in the current generation of reasoning models: their structural simplicity. Today’s models effectively produce a flat “town hall transcript”—a single, linear thread of unorganized conversation. They lack the sophisticated hierarchy, strict role differentiation, and structured division of labor found in effective human organizations. The sociological blueprints that map out optimal team structures have yet to be rigorously encoded into AI architectures. Current platforms provide only embryonic glimpses; the exact mechanisms to orchestrate durable, highly complex human-agent “centaur” ensembles without systemic collapse remain largely unsolved.

Verdict: Engineering the Cybersociety

“Agentic AI and the next intelligence explosion” successfully reframes the future of machine intelligence from an isolated engineering problem to a challenge of macro-sociology. AGI will not be a singular meta-mind; it will operate like a complexifying city. For the research community, the mandate is clear: we must pivot resources toward the design of mixed human-AI social systems. Building reliable institutional scaffolds and procedural delegation protocols will be the primary lever for scaling intelligence. To survive the intelligence explosion, we must build the constitutional infrastructure worthy of the combinatorial society we are actively bringing into existence.